We often hear about data lakes and their importance, but we rarely talk about a data lake for testing results and how it can help us. Companies have long struggled without a single place to hold all testing results and related data. Testing data is mostly scattered and hard to merge or move around, and this has proven to be one of the biggest barriers to successful testing.

In many companies, for instance, the automation testing team has its own way of storing, managing, and maintaining its results data. Performance testing, security testing, and other types of testing likewise have their own ways. We see this trend across teams, companies, and industries.

In performance testing and engineering, for example, load testing tools store their data one way, while profiling and analysis tools store theirs another way. Because of this, it is highly challenging to port data from one store to another. There is no simple way to connect these data stores and give the testing community across the organization the flexibility and power it needs.

Data is often called the new oil, and its benefits apply equally to the testing domain.

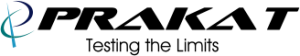

A data lake is a centralized repository used to store structured and unstructured data. A testing data lake can be formed by pushing all the various test results and related data into such a repository, for example AWS S3, which is an object storage service.

Creating a Testing Data Lake

Consider a company that has a testing team named “TCOE”. “TCOE” has been conducting automation testing, performance testing, exploratory testing, security testing, and accessibility testing across their products.

After executing performance test runs, “TCOE” pushes all test results and related data into the data lake it has created. The data can be stored as Parquet, XML, JSON, or any other file format before it is pushed. These files could hold response times, hits per second, errors, and CPU and memory utilization.
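
To make this concrete, here is a minimal sketch of what pushing one performance run could look like. It is an illustration under stated assumptions, not a prescribed implementation: the metric names, the `product=/test_type=/date=` path layout, and the helper names `summarize_run` and `write_to_lake` are all hypothetical, and a local directory stands in for the data lake. With an object store such as S3, the returned key could serve as the object key.

```python
import json
import statistics
from datetime import datetime, timezone
from pathlib import Path


def summarize_run(response_times_ms, errors, duration_s):
    """Reduce the raw samples of one load test to a few summary metrics."""
    ordered = sorted(response_times_ms)
    # Nearest-rank 95th percentile, computed with integer arithmetic.
    p95_index = (95 * len(ordered) + 99) // 100 - 1
    return {
        "avg_response_ms": statistics.mean(ordered),
        "p95_response_ms": ordered[p95_index],
        "hits_per_second": len(ordered) / duration_s,
        "error_count": errors,
    }


def write_to_lake(lake_root, product, test_type, run_id, metrics, when=None):
    """Write one run as JSON under a partitioned path and return its key.

    lake_root is a local directory standing in for the data lake (assumption).
    """
    when = when or datetime.now(timezone.utc)
    key = (
        Path(f"product={product}")
        / f"test_type={test_type}"
        / f"date={when:%Y-%m-%d}"
        / f"{run_id}.json"
    )
    record = {
        "product": product,
        "test_type": test_type,
        "run_id": run_id,
        "recorded_at": when.isoformat(),
        "metrics": metrics,
    }
    target = Path(lake_root) / key
    target.parent.mkdir(parents=True, exist_ok=True)
    target.write_text(json.dumps(record, indent=2))
    return key.as_posix()
```

Partitioning the path by product, test type, and date is one common way to keep later queries cheap, since a dashboard that only needs one product's performance runs can skip everything else.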

Automation testing results such as script pass/fail status, validations, screenshots, errors, and other details can be pushed to the data lake as well.
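
Many automation frameworks can emit JUnit-style XML reports. Assuming that format, the sketch below flattens a report into one record per test case, ready to be serialized and pushed. The function name `junit_to_records` and the record fields are assumptions made for illustration.

```python
import xml.etree.ElementTree as ET


def _status(case):
    """Map a <testcase> element to a status and an optional message."""
    for tag, status in (("failure", "failed"), ("error", "error"), ("skipped", "skipped")):
        node = case.find(tag)
        if node is not None:
            return status, node.get("message")
    return "passed", None


def junit_to_records(xml_text, product):
    """Flatten a JUnit-style XML report into one dict per test case."""
    root = ET.fromstring(xml_text)
    records = []
    # iter() includes the root itself, so this works whether the root is
    # a single <testsuite> or a <testsuites> wrapper around several.
    for suite in root.iter("testsuite"):
        # findall() only looks at direct children, so nested suites
        # are not counted twice.
        for case in suite.findall("testcase"):
            status, message = _status(case)
            records.append({
                "product": product,
                "test_type": "automation",
                "suite": suite.get("name"),
                "test": f"{case.get('classname')}.{case.get('name')}",
                "status": status,
                "duration_s": float(case.get("time", 0)),
                "message": message,
            })
    return records
```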

Similarly, data from other types of testing can be pushed to the data lake.

Teams can choose the technologies and services used to structure, store, and push data to the data lake based on their requirements. The same applies to the data lake itself: the technology, the service, and whether it runs in the cloud or on premises all depend on specific requirements.

There is a plethora of options to choose from when building the entire ecosystem.

Benefits of Building a Data Lake

Live feed of the overall quality of the product:

If a product is getting ready for release, a live dashboard built on the data lake can show its overall quality second by second: how the product is doing in performance, security, accessibility, and other testing.

Information segmentation:

If there are 50 different products, a data lake can merge quality data for the whole company and break it down by product and by testing type. The live dashboard can be flexible enough to meet the needs of different teams and people across the organization, from a CEO who wants a holistic view of the quality of all products to an engineer who wants to look at the details of a specific defect.
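
These breakdowns boil down to grouping the same records by different keys. A minimal sketch, assuming each record in the lake carries hypothetical `product`, `test_type`, and `status` fields:

```python
from collections import defaultdict


def pass_rates(records, *keys):
    """Return the pass rate per group, grouping records by the given keys.

    With no keys, all records fall into a single company-wide group
    identified by the empty tuple.
    """
    totals = defaultdict(lambda: [0, 0])  # group -> [passed, total]
    for record in records:
        group = tuple(record[key] for key in keys)
        totals[group][1] += 1
        if record["status"] == "passed":
            totals[group][0] += 1
    return {group: passed / total for group, (passed, total) in totals.items()}
```

Calling `pass_rates(records)` gives the company-wide figure, `pass_rates(records, "product")` breaks it down by product, and `pass_rates(records, "product", "test_type")` drills down further.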

- The tools, frameworks, and reports used across the various testing types (performance, automation, security, and others) are all different. Companies have always struggled to merge results and reports into a single view of quality. A data lake can help address this challenge.

- A data lake provides the flexibility to build any kind of visualization, dashboard, report, or metric.

- Perform predictive analysis, real-time analytics, etc.

- Build AI solutions and ML models to make better decisions.

- Foster innovation around testing and quality.

- Drive overall product success.

How Testing Teams Can Benefit from a Data Lake

- Automatically provide details on where the focus should and should not be for testing the current release and what kinds of tests are beneficial.

- Provide input on which tests or other factors are missing and could improve the quality of a product.

- If the quality across products is deteriorating, automatically point out why, when, how, and where.

- Help quickly identify duplicate defects and issues.

- Learn from the data and surface trends that are vital for product success.

- Predictive analysis of how a performance defect/issue can affect the complete system and quality.

- Enhance test coverage.

- Identify the critical areas of focus for testing based on historical data.

- Analyze the impact of defects and how they can affect product and customer success.

- Early detection of failures in the product.

- Help plan preventive actions.

- Predict the probability of finding defects in a feature of a product.

- Predict risks for each feature delivered.

- Help drive and optimize all testing efforts.

- Improve the testing process.

- Highlight the impact and the affected areas when a module gets updated.

- Recommend improvements to the test data to be used and identify what would likely contribute to efficient testing.

- Improve the regression suite by identifying and including the business cases and scenarios that most need to be tested.

- Automatically create test cases based on patterns.

- Create a risk-based testing strategy.

- Provide insights into the efficiency of test suites and help improve them.

- Save effort and cost in overall testing over time.

- Identify and remove duplicate and redundant test cases and efforts.
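
As one small illustration of the last point, duplicate test cases can be flagged by comparing normalized step text. This sketch assumes test cases are available as a mapping from a case ID to a list of step descriptions (a hypothetical shape). It only catches near-verbatim duplicates; finding reworded duplicates would need fuzzy or semantic matching.

```python
import re
from collections import defaultdict


def signature(steps):
    """Normalize step text so trivial differences do not hide a duplicate."""
    normalized = []
    for step in steps:
        # Lowercase, replace punctuation with spaces, collapse whitespace.
        text = re.sub(r"[^a-z0-9 ]+", " ", step.lower())
        normalized.append(" ".join(text.split()))
    return tuple(normalized)


def find_duplicates(test_cases):
    """Group the IDs of test cases whose normalized steps are identical."""
    groups = defaultdict(list)
    for case_id, steps in test_cases.items():
        groups[signature(steps)].append(case_id)
    return [sorted(ids) for ids in groups.values() if len(ids) > 1]
```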

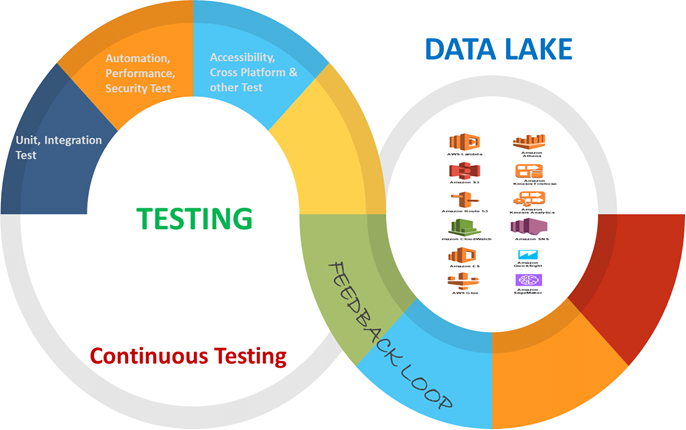

The whole idea of a testing data lake is to push all test results and test-related data into a data lake.

The data could be across:

- Multiple products.

- Multiple test types (for example performance testing, automation testing, security testing, functional testing, and other testing types).

- It could be everything and anything related to testing across the company.

The image above depicts an open-source testing data lake that companies across the industry can contribute to and leverage.

Future of Testing Data Lakes

Companies can also look at building a data lake from the production data. They can integrate the production data lake and testing data lake to build a robust solution that can provide insight into a lot of things.

Common Advantages

- Compare what is being tested in R&D with what actual end users do in production. This helps test teams identify where the testing gaps are and how to fix them.

- Realistic cross-browser and cross-platform testing requirements can be identified.

- Most heavily used business-critical scenarios and other performance testing requirements can be derived.

- Accessibility, automation, functional, and mobile testing requirements can be derived and improved.

- ML/AI solutions can compare the testing and production data lakes to evaluate predictions and improve the models.

- Learn from the production data lake.

- Improve shift-left testing.

- Improve product success and customer experience.

- Higher product quality.
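
The first advantage in this list, finding gaps between what is tested and what users actually do, can be sketched as a simple comparison. The inputs here are assumptions: `production_hits` maps a scenario name to its usage count from the production data lake, and `tested_scenarios` is the set of scenario names covered in the testing data lake.

```python
def coverage_gaps(production_hits, tested_scenarios, top_n=5):
    """List untested scenarios, ordered by their share of production usage."""
    total = sum(production_hits.values())
    if total == 0:
        return []
    gaps = [
        (name, hits / total)
        for name, hits in production_hits.items()
        if name not in tested_scenarios
    ]
    # Largest share of real traffic first: these are the riskiest gaps.
    gaps.sort(key=lambda item: item[1], reverse=True)
    return gaps[:top_n]
```

Ranking by share of production usage puts the scenarios real users exercise most at the top of the list of candidates for new tests.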

Typical Options

We could have an open-source testing data lake that companies can subscribe to, retrieve data from, and use for the larger benefit of the software industry. We could also have an open-source data model with APIs exposed so that companies across the industry can contribute to it and benefit from it equally.

An open-source data lake has benefits such as identifying similar patterns and issues across companies. Additionally, we can create the testing data lake using different technologies. One example is using AWS S3 to create the data lake and other AWS services like Kinesis, Athena, and QuickSight to build dashboards and analytics. Similarly, we can do predictive analytics and machine learning using AWS deep learning services and SageMaker.

Another way is to use Sumo Logic, Jenkins, Grafana, and a combination of other tools. There are many other ways to build this. Teams must determine the best approach based on the specialized needs and requirements of their organization(s).

Building and owning a testing data lake is becoming increasingly important for companies to succeed, and they should begin laying the foundation for one. It offers testing teams across the industry a long list of advantages and benefits.
